Mist AI Network Visibility for Scalable Enterprise Networks

How confident are you in what your network is telling you right now?

AI-driven networks are no longer a distant vision. According to Gartner, by 2026, over 30% of enterprises will automate more than half of their network activities, up from less than 10% in mid-2023.

This shift is not just about efficiency or scale. It’s about the new responsibilities that come with delegating critical decisions to algorithms that learn, adapt, and sometimes surprise even their creators.

CIOs and AI risk officers now find themselves at the intersection of innovation and accountability, where the rules are still being written, and the stakes are rising with every new deployment.

The challenge is not simply to keep up, but to set the pace for responsible, transparent, and resilient AI governance, especially as platforms like Mist AI redefine what’s possible in network management.

This guide covers:

P.S. Turn-key Technologies partners with Juniper Mist AI to help organizations navigate the complexities of AI-driven network governance. Our approach emphasizes practical frameworks, risk management, and compliance strategies tailored to the realities of enterprise networking.

Schedule a meeting to evaluate your AI-driven network governance and ensure your organization is ready for the future of AI-powered connectivity.

| Key Area | What CIOs Need to Know |
|---|---|
| AI Governance Frameworks | NIST, OECD, EU AI Act, and ISO 42001 offer structured approaches for responsible AI use in networks. |
| Risk Management | Address bias, data drift, explainability, and security with continuous monitoring and clear escalation. |
| Mist AI Governance | Requires special attention to automation opacity, vendor transparency, and integration with legacy IT. |
| Metrics & Oversight | Track incident reduction, bias findings, audit cycles, and use explainability tools for accountability. |
| Compliance & AI Regulation | Align with GDPR, EU AI Act, and sector-specific laws; maintain documentation and audit readiness. |
| Building a Governance Program | Cross-functional teams, policy development, lifecycle oversight, and continuous improvement are crucial. |
| Future-Proofing | Monitor regulatory shifts, prepare for next-gen AI, and adapt governance to evolving network realities. |
| Practical Checklist | Inventory assets, set policies, monitor AI, define escalation, and review governance regularly. |

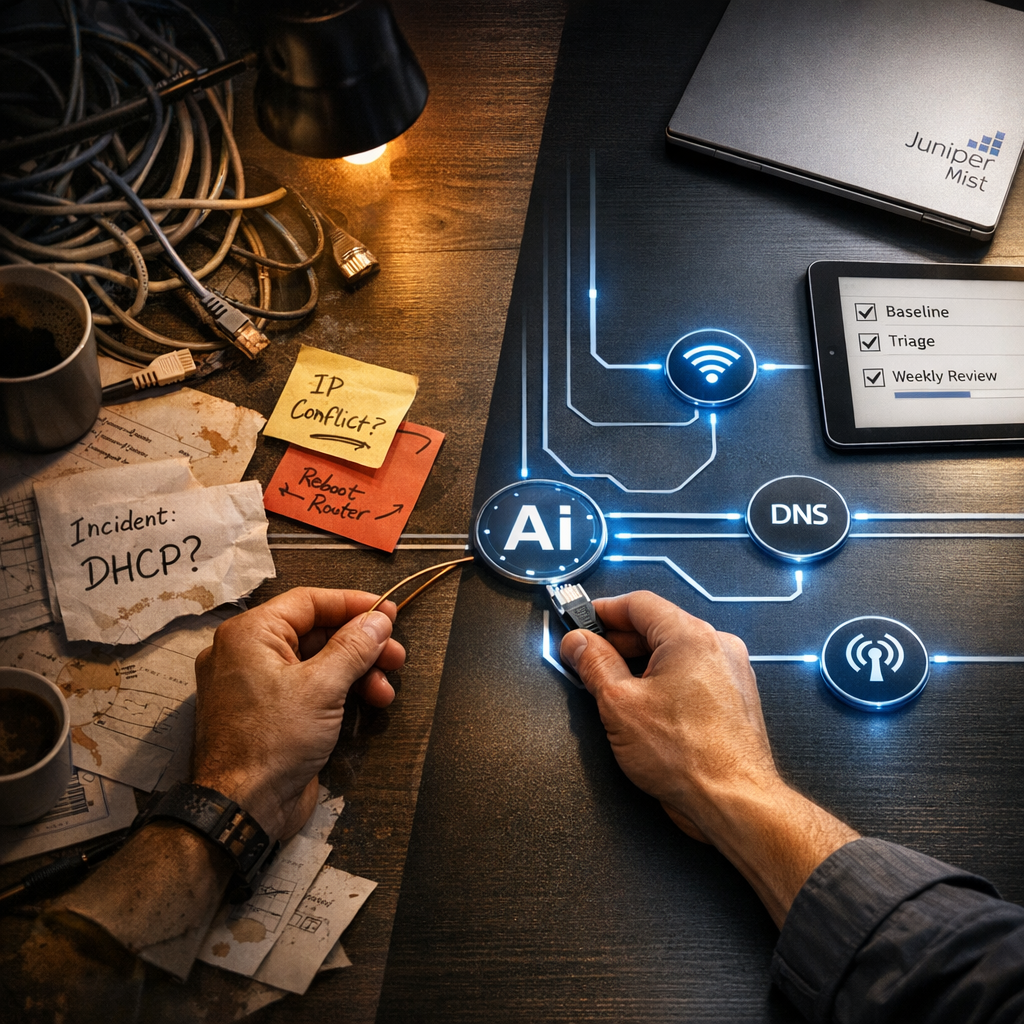

Enterprise networks are transforming as AI-driven automation becomes the new standard for connectivity, performance, and security.

Organizations are leveraging AI to automate configuration, optimize performance, and detect anomalies in real time. Mist AI, for example, brings machine learning and automation to network management, promising fewer outages and faster incident resolution.

As these technologies become more deeply embedded, CIOs and risk officers must ensure that oversight and accountability keep pace with the speed and complexity of AI-powered operations. The stakes are high: a misconfigured AI policy can ripple across thousands of endpoints, while opaque algorithms can complicate compliance and accountability.

The era of AI-driven networks demands a new playbook, one that balances innovation with rigorous oversight and agility with transparency.

The business case for AI in networking is compelling: operational efficiency, proactive security, and adaptive performance. However, these benefits come with new risks and responsibilities. AI systems can amplify biases, make opaque decisions, and introduce vulnerabilities that traditional controls may miss.

Regulatory bodies are responding with new rules and expectations, from the EU AI Act to sector-specific mandates.

For CIOs, the reputational and operational risks of unmanaged AI are real. A single incident, whether a data breach, compliance failure, or unexplained outage, can erode trust and trigger costly investigations. Effective AI governance is not just a compliance exercise. It is a strategic imperative that shapes how organizations innovate, compete, and protect their stakeholders in a world where algorithms increasingly call the shots.

The frameworks that guide AI governance are the backbone of any responsible deployment. NIST AI RMF, OECD AI Principles, the EU AI Act, and ISO 42001 each offer a different lens for managing risk, accountability, and responsible AI use.

Selecting the right framework is not just about compliance. It’s about building a governance foundation that can adapt to new technologies, regulatory shifts, and the unique demands of your network environment.

These frameworks provide a common language for cross-functional teams, clarify roles and responsibilities, and help organizations anticipate and manage the risks that come with AI adoption.

Frameworks such as NIST AI RMF, OECD AI Principles, the EU AI Act, and ISO 42001 all provide structured guidance, but each brings its own focus, strengths, and limitations, as the comparison below shows.

CIOs must evaluate these frameworks not just for compliance, but for their practical fit with network operations and the unique demands of platforms like Mist AI.

| Framework | Scope | Key Principles | Applicability to Networks | Strengths | Limitations |
|---|---|---|---|---|---|
| NIST AI RMF | US, global | Risk management, trustworthiness, transparency | Strong for risk-based network oversight | Practical, adaptable, risk-centric | Less prescriptive on sector-specific controls |
| OECD AI Principles | Global, policy-level | Human-centered values, transparency, robustness | Sets high-level governance expectations | Widely recognized, ethical focus | Lacks technical implementation detail |
| EU AI Act | EU, regulatory | Risk-based, accountability, transparency | Legally binding for EU operations | Enforceable, detailed on risk and compliance | Still evolving, regional focus |
| ISO 42001 | Global, standardization | Management systems, lifecycle, continual review | Aligns with IT/ISMS practices | Integrates with existing management systems | Requires adaptation for network-specific needs |
| Sector Frameworks | Industry-specific | Varies (e.g., healthcare, finance) | Addresses unique sectoral risks | Tailored controls, compliance alignment | Fragmented, may lack AI-specific guidance |

A robust AI governance program is built on a coordinated, organization-wide effort that weaves governance into every phase of the AI lifecycle. This approach ensures that responsibility is distributed, escalation paths are clear, and the program can adapt as both technology and regulatory expectations evolve.

Managing risk in AI-driven networks is ongoing work, not a one-time project. As organizations integrate machine learning and automation into their network environments, the risk landscape shifts in ways that traditional IT controls cannot always anticipate.

A thoughtful risk management strategy begins with a clear understanding of where AI is deployed, what data it processes, and which business decisions it influences. Mapping these elements provides a foundation for identifying potential points of failure or exposure. Technical assessments, such as stress testing and adversarial scenario planning, help reveal how AI might behave under pressure or in the face of unexpected data.

These exercises are most effective when paired with input from stakeholders across IT, security, compliance, and business units, since risks often arise at the intersection of technology and human processes.

Mitigation in this context is not a single action but a layered approach. Data governance policies must ensure that training data is accurate, representative, and regularly refreshed to prevent bias or drift.
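One common way to put a number on data drift is the Population Stability Index (PSI), which compares the distribution of a feature in a baseline sample against a recent one. The sketch below is illustrative only: the function name, the equal-width binning, and the idea of feeding it exported network telemetry are assumptions, not anything Mist AI exposes. The rule of thumb that a PSI above roughly 0.25 signals a significant shift is a widely used convention and should be tuned per metric.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
) -> float:
    """Return the PSI between two samples, binned on the baseline's range.

    Hypothetical helper for illustration; values outside the baseline range
    are counted in the nearest edge bin.
    """
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread; PSI is undefined")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp into the edge bins
            counts[idx] += 1
        eps = 1e-6  # floor to avoid log(0) for empty bins
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Example with made-up numbers: a recent sample shifted well away from baseline.
baseline_sample = [float(v) for v in range(100)]
shifted_sample = [v + 40.0 for v in baseline_sample]
drift_score = population_stability_index(baseline_sample, shifted_sample)
```

A score like this is only a trigger for investigation. It says the inputs have changed, not whether the model's decisions have degraded, so it works best alongside the model validation described next.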

Ongoing model validation is also essential for catching subtle performance changes before they impact operations. Security controls should be designed to monitor both human and AI-driven actions, with clear boundaries and escalation paths for when automated systems encounter ambiguous or high-risk situations.

Continuous monitoring, powered by automated alerts and real-time analytics, forms the backbone of this approach. These alerts should feed into a well-defined escalation process, ensuring that issues are investigated promptly and lessons are captured for future improvement.
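As a rough illustration of what a well-defined escalation process can look like in practice, the sketch below routes alerts to a destination based on severity and on whether an automated system has already acted. The severity levels, field names, and destination labels are all hypothetical. They stand in for whatever an organization's own runbook defines and do not reflect any Mist AI alert schema.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    INFO = 1
    WARNING = 2
    CRITICAL = 3


@dataclass(frozen=True)
class Alert:
    source: str                   # hypothetical: system that raised the alert
    severity: Severity
    automated_action_taken: bool  # True if AI-driven automation already changed something


def route_alert(alert: Alert) -> str:
    """Return the escalation destination for an alert (illustrative policy)."""
    if alert.severity is Severity.CRITICAL:
        return "page-on-call"
    if alert.severity is Severity.WARNING and alert.automated_action_taken:
        # An automated change plus a warning deserves a human look.
        return "human-review-queue"
    if alert.severity is Severity.WARNING:
        return "ticket"
    return "log-only"


destination = route_alert(
    Alert(source="wlan-anomaly-detector", severity=Severity.WARNING, automated_action_taken=True)
)
```

Keeping the routing rules in one small, reviewable function makes the escalation policy itself auditable, which matters as much as the alerts it handles.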

Oversight in AI-driven networks requires a deliberate, structured approach that combines quantitative metrics, specialized tools, and disciplined processes to ensure that AI systems remain accountable and aligned with organizational goals. CIOs must move beyond intuition and anecdotal evidence, building a governance model that is both measurable and auditable.
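To make "measurable and auditable" concrete, here is a minimal sketch of two such metrics: an incident reduction rate against a baseline period, and a check for whether an audit cycle has lapsed. The function names, the 90-day default cycle, and the sample numbers are assumptions chosen for illustration. Real targets would come from your own governance policy.

```python
from datetime import date


def incident_reduction_rate(baseline_incidents: int, current_incidents: int) -> float:
    """Fractional drop in incidents versus a baseline period.

    A negative result means incidents increased.
    """
    if baseline_incidents <= 0:
        raise ValueError("baseline_incidents must be positive")
    return (baseline_incidents - current_incidents) / baseline_incidents


def audit_overdue(last_audit: date, today: date, max_cycle_days: int = 90) -> bool:
    """True if more than max_cycle_days have passed since the last audit."""
    return (today - last_audit).days > max_cycle_days


# Made-up figures: 40 incidents in the baseline quarter, 30 in the current one.
rate = incident_reduction_rate(40, 30)
overdue = audit_overdue(last_audit=date(2025, 1, 1), today=date(2025, 6, 1))
```

Computing these from the same incident and audit records each period, rather than from ad hoc reports, is what makes the trend defensible to an auditor.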

The regulatory environment for AI-driven networks is in constant motion, shaped by new laws, evolving standards, and shifting expectations from regulators and stakeholders. For CIOs, compliance is not a static checklist to be completed once and forgotten. Instead, it is a dynamic process that must keep pace with both technological innovation and regulatory change. The consequences of falling behind are significant: non-compliance can lead to financial penalties, reputational damage, and operational disruptions that ripple across the organization.

To stay ahead, organizations need to embed compliance into the core of their AI governance practices. This starts with meticulous documentation that captures not just the technical details of AI system design and data flows, but also the rationale behind key decisions and the steps taken to address incidents.

Such documentation serves a dual purpose: it provides evidence for regulators during audits and supports internal learning by making it easier to review and refine governance processes over time.

Maintaining compliance also requires ongoing engagement with legal and compliance teams, who can interpret new regulations and advise on necessary policy updates. Scheduling regular policy reviews, with clear triggers based on changes in law, technology, or business context, helps ensure that governance remains current and effective.

Proactive communication with regulators and industry peers can offer early insights into emerging trends and best practices, allowing organizations to adapt before new requirements become mandatory. Ultimately, a strong compliance posture is built on transparency, adaptability, and a willingness to invest in the people, processes, and tools that make responsible AI possible.

Mist AI brings a unique set of governance challenges that require CIOs to rethink traditional oversight models. Its real-time automation, advanced analytics, and integration with legacy systems create a dynamic environment where decisions are made at machine speed and often without direct human intervention.

The complexity of Mist AI’s algorithms and the pace of its updates can make it difficult to maintain visibility, enforce policies, and ensure accountability. Addressing these challenges demands a governance approach that is both rigorous and adaptable, capable of bridging the gap between technical innovation and organizational control.

Operationalizing Mist AI governance requires a clear, actionable checklist that guides CIOs and risk officers through each critical step. This checklist should be revisited regularly and adapted as the network environment and regulatory landscape change.
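One lightweight way to keep such a checklist from going stale is to store each item with its last review date and a review interval, then flag whatever is overdue. The sketch below uses the five checklist areas named in the summary table earlier in this guide. The class name, dates, and intervals are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class ChecklistItem:
    name: str
    last_reviewed: date
    review_interval_days: int

    def is_overdue(self, today: date) -> bool:
        """True once more than review_interval_days have passed since the last review."""
        return today - self.last_reviewed > timedelta(days=self.review_interval_days)


def overdue_items(items: List[ChecklistItem], today: date) -> List[str]:
    """Names of checklist items whose review window has elapsed."""
    return [item.name for item in items if item.is_overdue(today)]


# Illustrative data only: dates and intervals are invented for the example.
CHECKLIST = [
    ChecklistItem("Inventory AI-managed network assets", date(2025, 1, 15), 90),
    ChecklistItem("Set and review automation policies", date(2025, 4, 1), 180),
    ChecklistItem("Monitor AI behavior", date(2025, 5, 20), 30),
    ChecklistItem("Define escalation paths", date(2024, 11, 1), 180),
    ChecklistItem("Review governance program", date(2025, 3, 15), 90),
]

needs_attention = overdue_items(CHECKLIST, today=date(2025, 6, 1))
```

Running a check like this on a schedule turns "revisited regularly" into something that can be verified rather than assumed.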

Governing AI-driven networks is a dynamic challenge that demands both strategic vision and operational discipline. CIOs and risk officers who embrace robust frameworks, practical oversight, and continuous adaptation will position their organizations for success in the AI era.

Turn-key Technologies brings deep expertise in Mist AI governance, helping organizations design, implement, and optimize oversight programs that balance innovation with accountability. Our partnership with Juniper Mist AI ensures that clients benefit from proven frameworks, practical tools, and ongoing support as AI and network technologies evolve.


NIST AI RMF, OECD AI Principles, the EU AI Act, and ISO 42001 are the most widely recognized frameworks for AI governance. Each offers a different perspective, from risk management to ethical principles and regulatory compliance. CIOs should select frameworks that align with their operational context, regulatory obligations, and the specific demands of AI-driven network environments.

Responsible AI use starts with clear policies, cross-functional oversight, and continuous monitoring. CIOs should establish governance teams, document decision-making processes, and use explainability tools to ensure transparency. Regular audits and stakeholder engagement help maintain accountability and adapt to evolving risks.

Key risks include algorithmic bias, data drift, model opacity, and security vulnerabilities. Managing these risks requires robust data governance, regular model validation, layered AI security controls, and automated monitoring. Escalation protocols and incident response plans ensure that issues are addressed promptly and effectively.

Mist AI can be governed using general frameworks like NIST AI RMF and ISO 42001, but its unique features, such as real-time automation and vendor-managed updates, require tailored controls. CIOs should work closely with vendors, document integration points, and adapt governance practices to Mist AI’s operational realities.

Effective governance relies on metrics such as incident reduction rates, bias findings, and audit cycles. Platforms include governance tools, monitoring dashboards, explainability solutions, and automated alerting systems. These enable CIOs to track performance, detect issues, and demonstrate compliance.

Future-proofing involves monitoring regulatory changes, preparing for next-gen AI technologies, and fostering a culture of continuous improvement. CIOs should update policies regularly, invest in skills development, and engage with trusted partners to stay ahead of emerging risks and opportunities.
